Getting a website to the top of Google's search rankings is not an easy task. A lot of work goes into ranking a website for high-traffic keywords. When it pays off, those rankings bring in a good amount of organic traffic from Google.

When you see a sudden drop in traffic on your website, it may be a sign that the website's Google ranking has dropped dramatically. To help you fix this quickly, we have put together a checklist to work through and find out why it happened.

If you are not using any rank tracking tools for your website, we recommend reading our post here: https://underwp.com/how-to-know-my-blog-rank-answered-using-free-tools/

Before you go fixing the ranking issue, make sure the rankings have actually dropped. Here's how to check them.

Check Google Rankings

1. Google Search Console

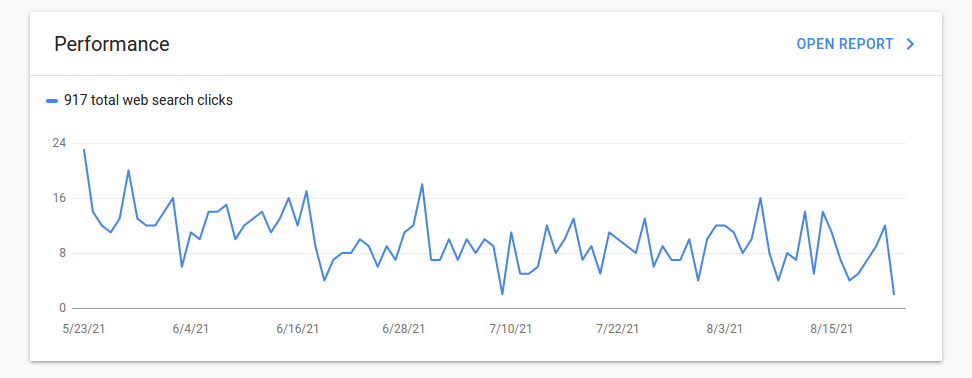

If you are using this free tool from Google to track your rankings, then it is time to check for any rank drops.

In the above image, you can see a gradual drop in rankings. This is seen over a period of 3 months when no SEO has been performed.

When you see a drop like this, there is no need to worry. In this situation, the website owner needs to put some effort into the website's SEO or hire a good digital marketing company.

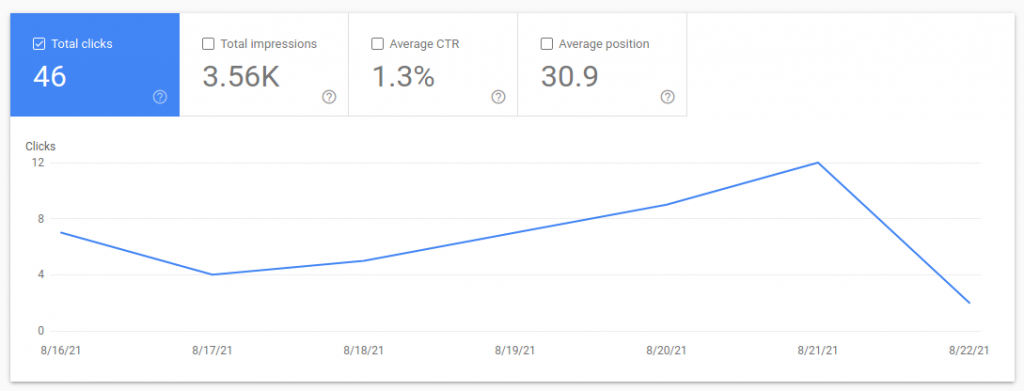

A sudden drop is not a good sign. It looks like the one shown in the image above.

A sudden drop in clicks means less traffic to your website. If you see this happen over a week or two, follow the checklist later in this post.
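One way to tell a sudden drop from normal day-to-day noise is to compare the average clicks of the most recent week with the week before it. The sketch below assumes you have exported daily clicks as a plain list of numbers; the function name, the 7-day window, and the 30% threshold are illustrative choices, not values recommended by Google.

```python
from statistics import mean


def sudden_drop(daily_clicks, window=7, threshold=0.3):
    """Return True if average clicks over the last `window` days fell by
    more than `threshold` (a fraction) compared with the `window` days before."""
    if len(daily_clicks) < 2 * window:
        raise ValueError("need at least two full windows of data")
    previous = mean(daily_clicks[-2 * window:-window])
    recent = mean(daily_clicks[-window:])
    if previous == 0:
        return False  # there was no traffic to lose
    return (previous - recent) / previous > threshold


# Made-up numbers, for illustration only.
steady = [100, 104, 98, 101, 99, 103, 100, 97, 102, 100, 99, 101, 98, 100]
crash = [100, 104, 98, 101, 99, 103, 100, 40, 38, 42, 35, 41, 39, 37]

print(sudden_drop(steady))  # False
print(sudden_drop(crash))   # True
```

A gradual decline over several months would not trip this check, which matches the distinction made above between a slow slide and a sudden drop.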

2. SEMRUSH Analytics

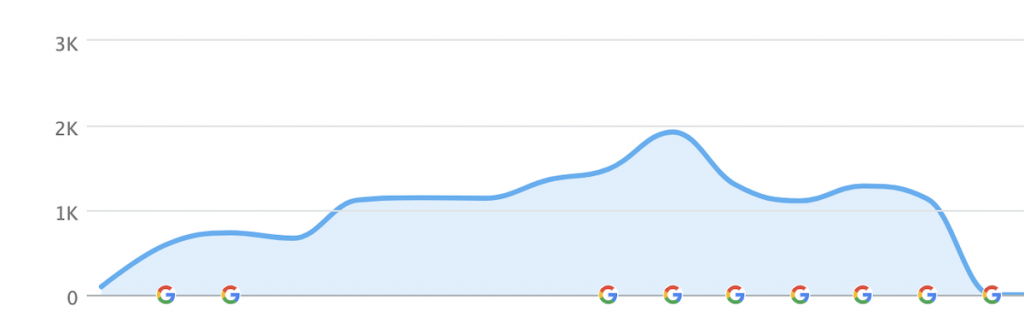

If you have added your website to SEMRUSH analytics as well, it is worth keeping an eye on it too. Their free plan lets you add only one website and keep track of its rankings.

Just log in to its dashboard and go to Domain Overview in the left panel. On the right side, you will see two graphs showing Organic Traffic and Organic Keywords.

When your organic keywords drop out of Google's results, you can see the decline here.

The only drawback of this view is that you cannot look at a period shorter than 1 month. A rank drop is only visible over 1 month, 6 months, or 1 year. You cannot customize the dates as you can in Google Search Console.

SEMRUSH's analytics are pretty good and, in our experience, more accurate than other tools. We recommend their paid version as it is a very reliable SEO tool.

Checklist To Fix A Dramatic Google Ranking Drop

Do not panic.

This is the first thing to remember when your Google ranking drops dramatically. It can be fixed. Every Google rank drop can be fixed with patience and some SEO work.

1. Google Updates

Yes, Google, like any software company, updates its algorithm often. Every update brings changes that affect a lot of sites. It is always a good practice to keep an eye on Google's blog for any update news: https://developers.google.com/search/blog

There are two ways that an algorithm update can affect your site's rankings. First, Google may have introduced a new algorithm (or a big upgrade to an older algorithm). Second, it could be one of the known algorithms' continual refreshes.

Not all Google updates are related to their algorithm. They can also alter the layout of their search engine result pages (SERPs) by including additional features that push your snippet down the page or attract more attention than your snippet.

Penalties Based on Algorithms

Google makes algorithm improvements from time to time, so it's important to keep watch on keyword rankings continually, not just when an update happens.

Past updates (Core Web Vitals, Spam update, Panda, Penguin, and so forth) have targeted issues such as artificial links, poor content quality, and poor page experience. If one of them affects your site, the resulting drop in SERP position can hurt your keyword rankings and organic traffic.

Solution: Publish articles or copy that is in-depth, well-written, and high in overall value (Panda). When it comes to unnatural links (Penguin), the problem is caused by low-quality and spammy backlinks.

Google may also consider the rapid purchase of a large number of links suspicious. After replacing low-quality content and removing harmful backlinks, wait for Google to recrawl your site or pages and for your SERP performance to improve.

2. Duplicate Content

Duplicate content can be found on a single website or across multiple websites. When two URLs have substantially the same content or lead to the same page, Google only shows users the canonical version, also known as the master copy or source URL.

When you use the canonical tag, you tell Google which copy to display in the search results.

The trouble is, if you don't add the canonical tag to a page URL, Google will display what it considers to be the canonical version of the content. In certain cases, the website appearing in Google's search results isn't what you wanted to rank.
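To find out which canonical URL a page currently declares, if any, you can parse its HTML and look for the `rel="canonical"` link element. The following is a minimal sketch using only Python's standard library; the class and function names and the sample URL are made up for illustration, and fetching the page's HTML is left out.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Remembers the href of the first <link rel="canonical"> it sees."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attr = dict(attrs)
        rel_values = (attr.get("rel") or "").lower().split()
        if "canonical" in rel_values:
            self.canonical = attr.get("href")


def find_canonical(html):
    """Return the declared canonical URL, or None if the page has none."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


# Hypothetical page source, for illustration only.
sample = (
    "<html><head>"
    '<link rel="canonical" href="https://example.com/shop/blue-shirt" />'
    "</head><body></body></html>"
)
print(find_canonical(sample))
```

If the function returns None for a page that has duplicates, Google is choosing the canonical version on its own, which is the situation described above.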

Solution: You have the option of removing the duplicate content. However, if you need to keep multiple URLs, such as for certain items, you should add the canonical tag to both the master copy and the duplicate pages.

In both cases, the canonical tag is used as follows:

<link rel="canonical" href="https://example.com" />

3. Lack Of High-Quality Backlinks

Keep an eye on your backlinks. They exist to improve your internet presence.

Backlinks, or links from other websites that point back to your pages, are a crucial Google ranking/local SEO ranking element, according to research. Having a credible site link to your page increases your trustworthiness, which helps Google decide how relevant your website is to a keyword or user inquiry.

Backlinks and external links, in summary, can affect your SERP ranking. Your search result position is at risk if you have no backlinks, too many of them, or spammy ones.

Solution: Tools like Ahrefs, Sitechecker, and SEMrush can help you track keyword ranks and audit your link profile. You can look at the sites that link to your pages, as well as their domain authority and anchor texts.

If a thin link profile is the source of your problem, learn how to strengthen your overall link building approach or include link-building into your online marketing efforts. Check out our SEO link building service where you can outsource your link building work to our team.

Remember that your link building must be fair to avoid being hit by Google's now-real-time Penguin algorithm. Make sure to only go after links from relevant sites while building links to your site, and keep your anchor text varied.

Additionally, analyze your link profile on a regular basis to discover any suspicious links that come your way.
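A quick way to judge whether your anchor text is varied is to count how often each anchor appears in a backlink export. The sketch below assumes you already have the anchor texts as a list of strings (for example, copied from a backlink tool's export); the function name and sample data are hypothetical, and what share counts as "too concentrated" is a judgment call.

```python
from collections import Counter


def anchor_text_shares(anchors):
    """Return (anchor_text, share_of_all_links) pairs, most common first."""
    counts = Counter(a.strip().lower() for a in anchors)
    total = sum(counts.values())
    return [(text, count / total) for text, count in counts.most_common()]


# Made-up anchor texts, for illustration only.
anchors = ["best running shoes"] * 6 + [
    "Example Store",
    "example.com",
    "click here",
    "this guide",
]

for text, share in anchor_text_shares(anchors):
    print(f"{share:.0%}  {text}")
```

In this made-up sample, one exact-match phrase accounts for 60% of all anchors, which is the kind of pattern worth reviewing.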

4. Check Noindex Tags

When you check keyword rankings and discover that some important pages aren't showing up in search results at all, you may have overlooked a “noindex” tag in the page's head section.

This meta tag instructs Google or any other search engine web crawler to leave the page out of its index. Your pages will not be able to rank in the SERPs until they are crawled and indexed.

Let's have a look at some of the likely causes of this problem first. The “noindex” tag may have been added to particular pages during development and then accidentally left in place when the pages went live.

Some content management systems (CMS), such as WordPress, also offer a feature that prevents search engines from indexing sensitive information pages.

Solution: Remove the “noindex” tag from affected pages to improve their SEO rankings. Check the Coverage report in Google Search Console to see if the “noindex” problem is there.

If your website is relatively new and you don't have enough Search Console data, try using tools that crawl your site and show you which pages are affected by the Google search ranking issue.
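As a rough illustration of what such a crawl checks for, the sketch below looks for a "noindex" directive in a page's robots meta tags and, optionally, in the value of an `X-Robots-Tag` HTTP response header. It uses only Python's standard library; the class and function names are made up, and downloading the page is left out.

```python
from html.parser import HTMLParser


class RobotsMetaFinder(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        name = (attr.get("name") or "").lower()
        if name in ("robots", "googlebot"):
            content = attr.get("content") or ""
            self.directives += [
                d.strip().lower() for d in content.split(",") if d.strip()
            ]


def has_noindex(html, x_robots_tag=""):
    """Return True if the HTML or the X-Robots-Tag header value blocks indexing."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    header = [d.strip().lower() for d in x_robots_tag.split(",") if d.strip()]
    # "none" is shorthand for "noindex, nofollow".
    return any(d in ("noindex", "none") for d in finder.directives + header)


print(has_noindex('<head><meta name="robots" content="noindex, nofollow"></head>'))
print(has_noindex('<head><meta name="robots" content="index, follow"></head>'))
```

The header check matters because a page can be excluded from the index by its server configuration even when the HTML itself contains no "noindex" tag.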

5. The Website's Page Experience (Core Web Vitals)

Core Web Vitals, which measure page load speed, interactivity, and visual stability, are part of Page Experience, one of Google's current ranking signals.

In June 2021, the new SEO keyword ranking criteria went into effect. Setting Page Experience optimization in motion can take several weeks or more, and it takes about four weeks to see results.

Solution: There's no time to spare if you haven't audited your site or updated your SEO approach. For more information, see our Core Web Vitals SEO In 2021.
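When you audit your pages, it helps to know how each measurement is rated. The sketch below classifies a value using the "good" and "poor" thresholds Google publishes for each metric: Largest Contentful Paint (loading), First Input Delay (interactivity), and Cumulative Layout Shift (visual stability). It also includes Interaction to Next Paint, which replaced First Input Delay as a Core Web Vital in 2024. The dictionary keys and function name are made up for illustration.

```python
# (upper bound for "good", upper bound for "needs improvement")
THRESHOLDS = {
    "lcp_seconds": (2.5, 4.0),  # Largest Contentful Paint
    "fid_ms": (100, 300),       # First Input Delay
    "inp_ms": (200, 500),       # Interaction to Next Paint
    "cls": (0.1, 0.25),         # Cumulative Layout Shift (unitless)
}


def rate(metric, value):
    """Classify a measurement as 'good', 'needs improvement', or 'poor'."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


print(rate("lcp_seconds", 2.1))  # good
print(rate("fid_ms", 180))       # needs improvement
print(rate("cls", 0.3))          # poor
```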

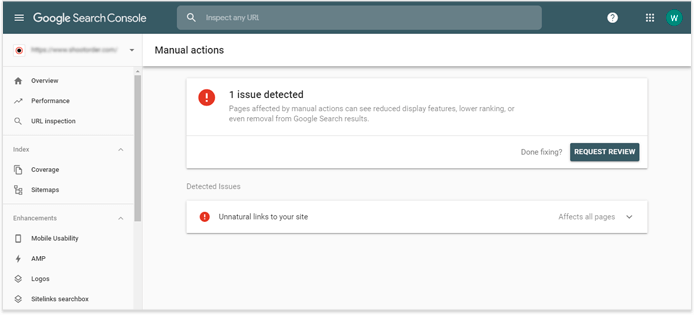

6. A Penalty Imposed By Search Engines Manually

When human reviewers at Google find that pages on your site do not comply with Google's webmaster quality guidelines, they take manual action.

The most typical reasons for manual Google penalties are that your site has been hacked or that it contains user-generated spam, unnatural backlinks, thin content, or cloaking.

You can learn more from Google about each form of manual action and how to handle it here: https://support.google.com/webmasters/answer/9044175?hl=en&visit_id=637303954507623862-3586073394&rd=1

Manual penalties should be checked first if you see a significant reduction in ranks overnight (over 10 positions) for a large number of keywords. To check, go to the Security and Manual Actions > Manual Actions area of your Google Search Console account.

If the site has been hit with a manual penalty, a message will appear in the account, noting that the action was taken manually and explaining why. Google won't be as exact as you'd like, but it will give you a good indication of where to look for the problem.
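To spot the "over 10 positions for a large number of keywords" pattern, you can compare two rank snapshots taken a day apart. This is a minimal sketch that assumes each snapshot is a dictionary mapping a keyword to its position; the function name and the sample keywords and positions are made up for illustration.

```python
def large_drops(before, after, min_drop=10):
    """Return {keyword: positions_lost} for keywords that fell by more than min_drop."""
    drops = {}
    for keyword, old_position in before.items():
        new_position = after.get(keyword)
        if new_position is None:
            continue  # keyword is missing from the newer snapshot
        lost = new_position - old_position  # a higher position number is worse
        if lost > min_drop:
            drops[keyword] = lost
    return drops


# Made-up snapshots, for illustration only.
yesterday = {"wordpress seo": 4, "fix ranking drop": 7, "seo checklist": 12}
today = {"wordpress seo": 19, "fix ranking drop": 31, "seo checklist": 14}

print(large_drops(yesterday, today))
```

If most tracked keywords show up in the result, checking the Manual Actions area first is a sensible next step.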

It also specifies whether the action impacts the entire site or just specific pages or subdomains.

Solution: The first step is to figure out what caused the damage to your website. If there are any on-page concerns, you'll probably be able to figure out what caused them because you were probably aware of the risks when you tried that grey-hat or blackhat trick.

Off-page issues might arise as a result of both algorithmic updates and poor link-building strategies, or from the deliberate actions of someone who wants to undermine your rankings.

If you need help with off-page or on-page SEO, search for the issue in our blog: https://underwp.com/blog/, and you should find a solution for it.

7. New Website Launched

When starting a new website, some people may be puzzled by search engine keyword ranks or lack an SEO strategy for their brand-new website. It's important to keep in mind that ranking and reaching the top of the SERPs takes time. Allow Google to crawl your website from the minute it goes online.

Consider how elements such as content quality, user experience (UX), security, and even presence on Google My Business (GMB) listings affect when your SEO efforts start to pay off. It could take anywhere from a couple of months to a year.

Some pages may climb to the top of the SEO search rankings rapidly, while others may take longer.

Solution: The only solution to this is to register your website in Google Search Console and submit your sitemap. Sitemaps can be provided in XML, RSS, mRSS, Atom 1.0, or plain text format.

From here on, you need to be patient and wait for Google crawlers to start indexing and ranking your website.
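If your CMS does not generate a sitemap for you, a basic XML sitemap is simple to build. The sketch below uses Python's standard library and the namespace defined by the sitemaps.org protocol; the function name and the example URLs are placeholders, and optional elements such as `lastmod` are left out.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a minimal XML sitemap (as a string) listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Placeholder URLs, for illustration only.
sitemap = build_sitemap(["https://example.com/", "https://example.com/shop"])
print(sitemap)
```

Save the output as a file such as sitemap.xml on your site, then submit its URL in the Sitemaps section of Google Search Console.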

8. Blocking Search Engine Bots

A text file called robots.txt contains instructions for web crawlers like Googlebot. It either restricts or allows access to your pages. If it contains a rule that prevents Googlebot from crawling specific pages, for example, the search engine will be unable to crawl those pages. This may have an impact on your ranking and visibility in the SERPs.

Solution: Ensure that search engine bots have access to key pages, such as https://example.com/shop
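You can test whether a set of robots.txt rules blocks a given URL before or after publishing them. The sketch below uses Python's built-in `urllib.robotparser` on a hypothetical rule set; the rules and the `can_crawl` helper are made up for illustration, and Python's parser does not replicate every detail of how Googlebot matches rules (wildcards, for example), so treat the result as a first check only.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules that (probably by mistake) block the shop.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /shop
"""


def can_crawl(robots_txt, url, user_agent="Googlebot"):
    """Return True if the given rules allow user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


print(can_crawl(ROBOTS_TXT, "https://example.com/shop"))   # False
print(can_crawl(ROBOTS_TXT, "https://example.com/blog/"))  # True
```

Here the `Disallow: /shop` rule keeps crawlers away from a key page, so removing that line would restore access.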

The robots.txt file lives at the root of your domain, for example https://example.com/robots.txt. If you're not sure how to read or edit it, hire professionals. Because they understand the scope and restrictions of a robots.txt file, this should help you avoid serious technical SEO mistakes.

9. Content Without Search Intent

This is an important point to remember whenever you write content for your website. You can write a lot of words in a post targeting the main keyword.

But if the content of that article does not satisfy the user's search intent, the user will not find the solution they were looking for.

Solution: Write content with a focus on keywords and search intent. A good article gives a complete solution to the user. Write with this intention and it should be good for Google bots.

Many writers focus only on the keywords and forget the user intent. Sometimes the user's search intent is not to buy but to get information or learn about other users' experiences.

One keyword can have many search intents, so study your competitors and learn from them. We might think the user wants to buy a product when the user is only looking for information.

Researching and writing about an article's keyword with the user's search intent in mind is very important. Always remember this point.

10. Hacked Website

Unfortunately, site hacking is a very lucrative industry. Hackers infect websites with dangerous code, spammy content, and links once they've gained access. This is quite damaging to your rankings, so make sure you haven't been hacked.

It's unlikely that your website has been hacked, but if it has, you need to know about it as soon as possible so that you can take steps to reclaim control and restore it to its former state.

Solution: Google looks for harmful code and activities on websites. If they discover that your website has been hacked, they will notify you via Google Search Console. While this check isn't foolproof, it only takes a few seconds to check Google Search Console to see whether they're aware of a hack, so we always advise doing so.

Log in to your Google Search Console account. Navigate to Security & Manual Actions > Security Issues. You can see any hacked issues here and rectify them as soon as possible.

Final Words

Google rankings are volatile in nature. They drop and jump up quite often. It is the fun part of SEO, I will say.

But if you see that your Google ranking has dropped dramatically, make sure everything is in place according to the checklist above. This is why you always have to keep an eye on Google rankings. Analyzing Google rankings regularly will help you find any drops immediately.

SEO is a long-term game you play with Google and other search engines. So it is always a better choice to hire a team that is well experienced in digital marketing and can get you results. An SEO team is important in any company to avoid the drastic effects of a drop in Google ranking.

A good SEO team can help you recover and get back on your feet as soon as possible. Our team at UnderWP has more than a decade of experience in getting proven results. We always monitor our clients' rankings and fix issues like this immediately.

Noindex tags were an issue in my case. Thanks for reminding me of that.

Content quality is the most important factor for most websites. This is why many websites get affected by the Google algorithm updates. E-A-T is the most important factor every webmaster should think about.

As long as your website is providing good content and you aim to get EAT for your website, I think there is nothing to be worried about when Google core updates happen.

Keeping an eye on website analytics will tell you if your website's server goes down or if there are any errors on the website.

When the website goes down, it affects the rankings too. So does the website speed.